Don't stress about your kids being AI native

Teach them to read, not prompt

I had two debates this week about kids and AI. Over dinner, someone said teaching kids to write is a waste of time now that they’ll just be using LLMs. Another asked: what’s the product that will teach kids to be AI native?

I pushed back on both. With my kids, I’m putting most energy into old stuff: reading, writing, math. And into very human capacities like agency and curiosity, mostly built in the real world without a screen. I’m introducing them to LLMs with guardrails on, and they’re picking up the concepts. But functional AI skills aren’t where I’m investing any real energy with them.

We don’t have a clue what “AI native” even means yet. Any guess we make now will be wrong in 6 months, let alone 3-5 years. The more important and interesting question: what will kids need to thrive in a future that nobody can predict, but that will certainly be massively different from today?

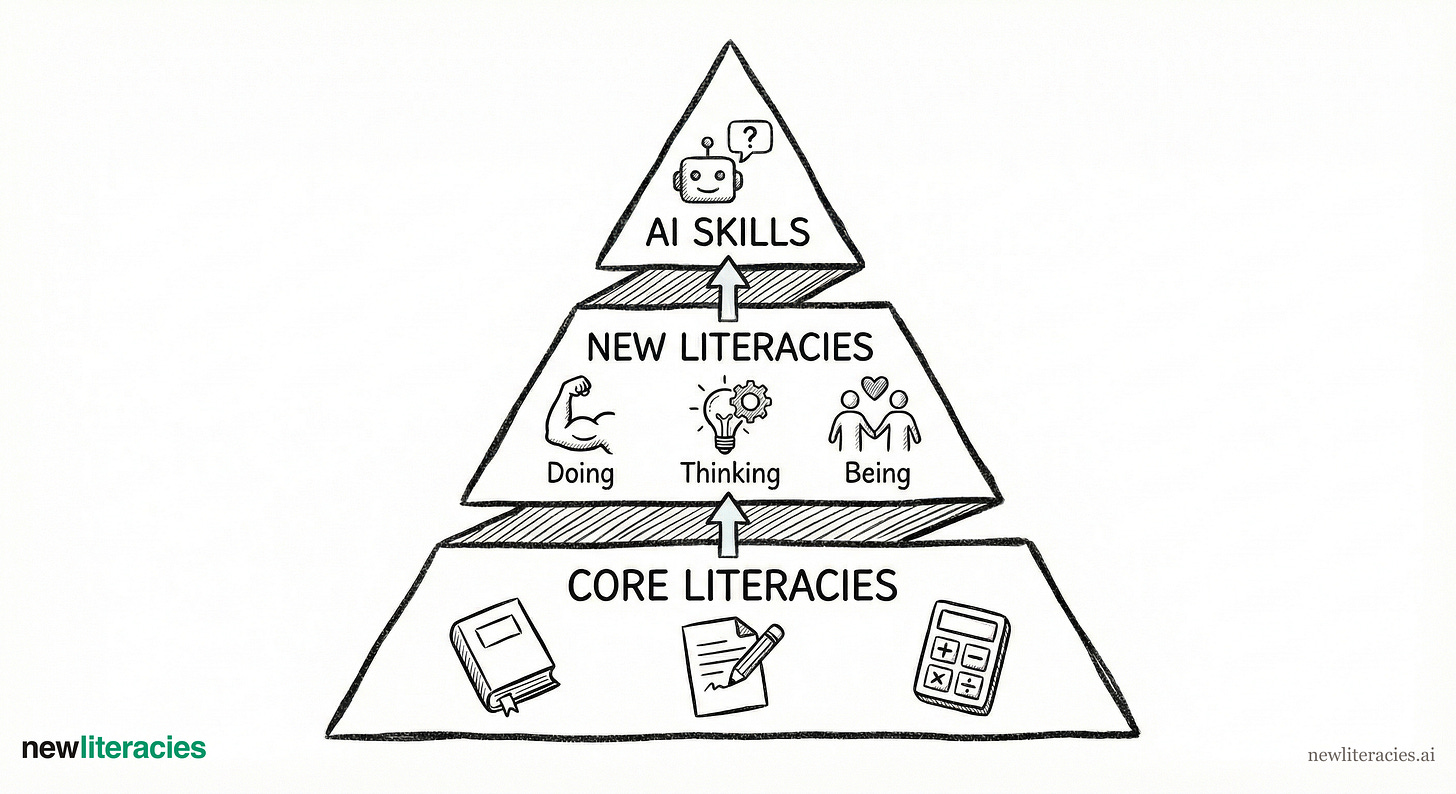

I see three layers, and learning to use AI is the least important of them.

Layer one: Core literacies

The most important foundation for kids in an AI world is hundreds of years old: reading, writing, math, scientific thinking.

When people ask me about kids and AI, they expect something new and futuristic. I start with something ancient. Reading, writing, and structured thinking are literally the language of LLMs. Every interaction with AI is language. A kid who reads well, writes clearly, and can think through problems is going to be better at using whatever AI shows up next year, or in ten years. Math builds the logical thinking you need to evaluate whether AI output actually makes sense. It’s also the backbone of science, engineering, and pretty much everything quantitative. If anything, AI raises the bar on all of these.

I hear the counterargument: “kids don’t need to learn to write anymore, AI will write for them.” That’s roughly like saying kids don’t need math facts because calculators exist. Writing is thinking. It’s how you organize ideas, figure out what you believe, stress-test your own reasoning. (Side note: verbal expression might overtake writing in some contexts, but the underlying skill is the same: structuring and communicating your thinking clearly.)

Everyone’s talking about Alpha School as the most “AI-native” school in America. It’s an exciting model, and the foundation of their program is actually core literacies: reading, writing, math. They literally strive for perfect scores on standardized tests. The AI drives personalization, which accelerates progress. The substance is ancient (see: Lindy).

At ClassDojo, our first AI learning product is Sparks, focused on learning to read. And at home, that’s a huge focus too. Ms. 8 writes stories for fun. Ms. 10 devours books. We’ve recently started Beast Academy for supplemental math. I don’t frame these as “skills for AI”. They’re just the foundation for being a capable human. AI makes these skills more valuable, not less.

Layer two: New literacies

This layer needs more attention: the human capacities that won’t expire, that get more important with AI, and that you can actually train. I’m calling them new literacies.

Doing

Agency. Acting independently, making choices, driving outcomes. As AI does more and more for you, the instinct that you can just do things becomes even more critical.

Persistence. Sticking with hard things when it would be easier to quit. AI makes almost everything much easier. The edge goes to people who can push through frustration and keep going.

Adaptability. Learning, unlearning, and learning again. The pace of change isn’t slowing down. Kids who can let go of what worked yesterday and pick up what works tomorrow have a massive advantage.

Thinking

Curiosity. Pulling on the thread. AI is extraordinary at answering questions. You still need to ask them.

Creativity. Making things that didn’t exist, because you wanted them to. AI is a massive accelerant here. But the impulse to create from nothing has to start with the kid.

Judgment. Making good calls about what’s true, what’s good, what matters. AI-generated content is everywhere already. Figuring out what to trust is only going to get harder.

Being

Connection. Knowing others and being known. The more time we spend talking to machines, the more real human relationships matter.

Purpose. Knowing who you are and why it matters. AI can optimize any path. Choosing which path to walk is human.

These are all trainable. They’re also surprisingly easy to squash. Overprotect your kid and you kill agency. Park them in front of passive screens all day, and they lose curiosity. Solve every problem for them and persistence dies. Schools and parents do this every day, usually with good intentions.

I’ve written deeper explorations of each of these at newliteracies.ai.

Layer three: Functional skills

This is where you find most of the popular conversation about kids and AI. “Teach kids to prompt.” “Build KidGPT.” “Get them AI native.”

I get why. It’s tangible, you can vibe a product around it, and a kid using LLMs today can do things that feel like magic. It’s tempting to start here, as a parent and as a builder.

But this layer has the shortest half-life. Think how fast these tools move. The skills for using AI effectively in February 2026 are wildly different from even 3 months ago. Prompt engineering—supposedly the hot new skill—is already fading as models get better at figuring out what you mean. Now we’re talking about harness engineering. Whatever functional skill we teach today will be irrelevant in a year, maybe less.

I’m not saying these skills are irrelevant for kids. I’m introducing mine to LLMs, and I built a tool for curiosity for them last year. But as a parent, functional skills are the last layer I’m investing in, not the first. A kid who reads well, thinks clearly, and can push through difficulty will figure out whatever tools exist in 2030. A kid who can prompt ChatGPT but can’t write a coherent paragraph is in real trouble.

Why the ordering matters

I think of it as a stack. Core literacies at the base, new literacies in the middle, functional skills on top. Each layer depends on the one below. Good judgment requires clear thinking underneath it. And using AI tools well requires curiosity and the persistence to actually figure them out.

Most of the conversation I hear about kids and AI starts at the top of the stack and stays there. I think that’s backwards.

What I’m exploring

This framework is shaped by my biases. I have young kids (10, 8, 3), and I work in this space professionally, both of which probably create blind spots. The concept of “new literacies” isn’t gospel; maybe I’m missing important capacities, or grouping them wrong. But I’m pretty confident about the ordering: foundations first, human capacities second, tool-specific skills last.

There are certainly products that should exist here but don’t, particularly around training new literacies like agency, adaptability, and decision-making.

But the most useful things parents can do right now are probably pretty boring: read with your kids, let them struggle with hard things, foster their curiosity instead of answering every question. The AI tools will change, and kids pick those up fast. The question is whether they’ll have the foundation to use them well.
